Conjuring power from a theory of change: The PWRD method for trials with anticipated variation in effects

Timothy Lycurgus, Ben B. Hansen, and Mark White

Many efficacy trials are conducted only after careful vetting in national funding competitions. As part of these competitions, applications must justify the intervention’s theory of change: how and why do the desired improvements in outcomes occur? In trials with repeated measurements on participants, some measurements may be more likely than others to manifest a treatment effect, and the theory of change may provide guidance as to which observations are most likely to be affected by the treatment.

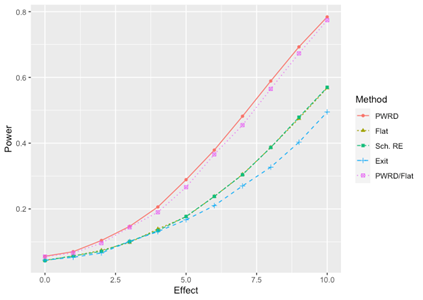

Figure 1: Power for the various methods across increasing effect sizes when the theory of change is correct.